Bioinfo

Research themes

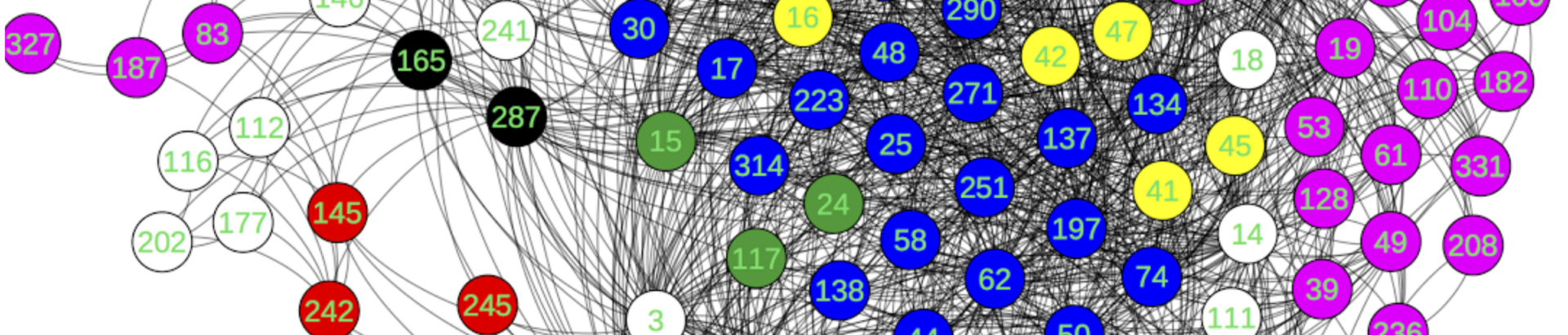

Biological and molecular networks

In this theme, we develop new computer science methods for the modelling, design, and analysis of biological and molecular networks. These include metabolic, signalling, and regulatory networks, as well as molecular structures, which we model as graphs. The theme is organized in three axes described below.

RNA structural bioinformatics

We aim to develop combinatorial and graph-based approaches for solving key problems in structural bioinformatics. In particular, we develop new approaches for the analysis and prediction of RNA three-dimensional (3D) structures. Our main long-term objective is to better understand how RNA folds, and to develop data-driven algorithmic approaches able to predict the structure of RNA molecules. Our work on the fine-tuned analysis of recurrent interaction motifs gives us a solid basis for progressing toward this objective.

Keywords: RNA 3D structure, graph algorithms.

Main investigator: Alain Denise

Main software: CaRNAval, VARNA, GenRGenS, Cartaj, Rna3Dmotif, GARN

Synthetic biology

The purpose of our project in synthetic biology is to design artificial micro-reactors, called non-living bio-machines (NLBs), that can simultaneously sense specific concentrations (or ranges of concentrations) of the biomarkers of a target pathology, implement a diagnosis decision tree (a Boolean expression), and give a positive answer, for example by synthesizing a colored compound. To this end, we develop algorithms and software tools that support the design of NLBs.
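The decision logic described above can be illustrated in software, independently of how an NLB realizes it biochemically. The following is a minimal sketch, not the team's design tooling: the `RangeSensor` class, the `diagnose` function, the marker names, and every threshold are hypothetical placeholders chosen for illustration only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RangeSensor:
    """Fires when a biomarker concentration lies within [low, high]."""
    biomarker: str
    low: float
    high: float

    def fires(self, sample):
        return self.low <= sample[self.biomarker] <= self.high


# Hypothetical sensors: marker names and thresholds are placeholders,
# not clinical values.
a_elevated = RangeSensor("marker_a", 5.0, float("inf"))
b_in_window = RangeSensor("marker_b", 1.0, 3.0)
c_low = RangeSensor("marker_c", 0.0, 0.5)


def diagnose(sample):
    """Boolean expression: marker_a elevated AND (marker_b in window OR marker_c low)."""
    return a_elevated.fires(sample) and (
        b_in_window.fires(sample) or c_low.fires(sample)
    )


positive = diagnose({"marker_a": 7.2, "marker_b": 2.0, "marker_c": 0.9})
negative = diagnose({"marker_a": 2.0, "marker_b": 2.0, "marker_c": 0.1})
print(positive, negative)
```

Each sensor plays the role of a concentration-range detector, and `diagnose` combines the sensor outputs in a single Boolean expression, mirroring the sense, decide, and report sequence of the paragraph above.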

Keywords: bio-machines, boolean networks, diagnosis.

Main investigator: Patrick Amar

Main software: Skillfinder, NetGate, NetBuild, HSIM

Systems biology

Our main contributions in systems biology include logic programming and abstract interpretation for capturing the qualitative dynamics of signalling and gene networks, constraint-based analysis of fluxes in metabolic networks, and algorithms for identifying patterns of reactions in metabolic networks.

Keywords: model abduction, logic programming, stochastic processes, flux analysis.

Main software: CoMetGene

Population genetics and molecular evolution

The team has a strong interest in evolutionary analyses from genomic data at multiple levels: gene, genome, population, and species. Our research is organized into three interconnected themes.

We focus on analyzing modern or ancient DNA samples across one or more populations or closely related species to unravel their demographic history, and detect adaptive signals at the gene level. We place particular emphasis on improving evolutionary models/simulators by integrating understudied biological processes and non-genetic information, such as cultural, geographical, and archaeological factors. These elements are not only essential for controlling confounding effects on evolutionary inference but are also interesting in their own right. At the gene and genome levels, we investigate molecular-scale processes and how evolutionary forces create and maintain complex genomic features.

Paleogenomics (Ancient DNA)

The availability of ancient DNA, extracted from fossils or old bones, is expanding rapidly. The team is developing specialized tools and testing traditional ones to analyze ancient DNA, addressing the characteristics that differentiate it from modern DNA. Through numerous collaborations, we have developed models for analyzing paleogenomic samples (in terms of structure, admixture, or relatedness) and simulators of ancient DNA damage and bacterial evolution. We also apply new or existing tools to study ancient human populations and to unravel evolutionary changes in human and pathogen populations in Mexico.

Keywords: heterogeneous data, DNA damage, simulation, factor model, benchmarking, robustness

Main investigator: Flora Jay

Main software: TFA (with TIMC), BactSLiMulator (also integrated into SLiM)

Other: aDNA robustness pipeline, Paleogenomic-Datasim (with U Brown, UNAM), GRUPS-rs (with MNHN), older GRUPS version (with UC Berkeley)

Demographic and Cultural Inferences

We develop realistic simulators, along with machine learning and deep learning models for evolutionary inference, advancing beyond methods that rely solely on summary statistics. Our research focuses on reconstructing past demographic history (population size changes, migrations, …) and on understanding the impact of cultural processes on genetic evolution. Additionally, we enhance the characterization of contemporary genomic diversity by using generative neural networks to create proxy genomic datasets. In the long run, such synthetic datasets could augment public databases.
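For context, the summary-statistic baseline that this work moves beyond can be sketched as rejection-based Approximate Bayesian computation. The example below is a minimal illustration under stated assumptions, not the team's software: it uses the standard neutral coalescent with constant population size, a single summary statistic (the number of segregating sites), and a uniform prior on the scaled mutation rate `theta`. The function names, the prior bounds, the tolerance, and the observed value are all hypothetical.

```python
import random


def simulate_segregating_sites(theta, n_samples, rng):
    """Draw the number of segregating sites under the standard neutral coalescent."""
    # Total branch length in coalescent units: while k lineages remain,
    # the waiting time to the next coalescence is Exp(k * (k - 1) / 2).
    total_length = 0.0
    for k in range(n_samples, 1, -1):
        total_length += k * rng.expovariate(k * (k - 1) / 2)
    # Mutations fall on the tree as a Poisson process with rate theta / 2;
    # count the arrivals by summing exponential gaps along the total length.
    count = 0
    position = rng.expovariate(theta / 2)
    while position < total_length:
        count += 1
        position += rng.expovariate(theta / 2)
    return count


def abc_rejection(observed, n_samples, n_draws, tolerance,
                  prior=(0.1, 20.0), seed=0):
    """Keep the prior draws whose simulated statistic is close to the observed one."""
    rng = random.Random(seed)
    accepted = []
    for _ in range(n_draws):
        theta = rng.uniform(*prior)
        simulated = simulate_segregating_sites(theta, n_samples, rng)
        if abs(simulated - observed) <= tolerance:
            accepted.append(theta)
    return accepted


# Hypothetical observation: 30 segregating sites in a sample of 20 sequences.
posterior = abc_rejection(observed=30, n_samples=20, n_draws=5000, tolerance=2)
print(len(posterior), sum(posterior) / len(posterior))
```

The accepted values approximate the posterior distribution of `theta`. Reducing the data to one hand-picked statistic discards most of the information in the genomes, which is the limitation that motivates learning directly from richer representations.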

Keywords: simulation-based inference, Approximate Bayesian computation, deep neural networks, generative neural networks, simulation

Main investigators: Flora Jay, Fanny Pouyet

Main software: dnadna, artificial_genomes, SML demographic inference

Other: dlpopsize, DemoSEQ

Molecular Evolution

We investigate processes such as natural selection and meiotic recombination, and evaluate tools for reconstructing the evolutionary history of the genomes of species such as humans and yeast. Additionally, we explore the influence of mating systems at a broader evolutionary scale using theoretical and simulation tools. At the molecular level, we examine how non-adaptive evolutionary forces shape genomic features and how complexity can emerge and be sustained over time.

Keywords: simulation, agent-based model, coalescent theory, summary statistics, GC content, genetic linkage, recombination

Main investigators: Vincent Liard, Fanny Pouyet

Main software: Aevol

Biological and biomedical data integration and analysis

Scientific workflows

Bioinformatics experiments are increasingly complex, involving very large sets of interrelated tools and huge data sets. Scientific workflow management systems have been designed to help users design and execute their experiments. Supporting scientists in designing reproducible and reusable workflows and in managing the execution of such large-scale experiments is of paramount importance for enabling new discoveries in biology. Our areas of interest include optimizing workflows (e.g., removing unnecessary steps), designing FAIR workflows, querying workflow repositories (e.g., retrieving similar workflows), and managing provenance information (e.g., understanding differences between two executions). Our approaches make use of data science and graph-based techniques.
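As one possible reading of "removing unnecessary steps", a workflow can be treated as a dependency graph and pruned to the steps that a requested output actually needs. This is a minimal sketch under that assumption and does not describe how BioFlow-Insight works; the `prune_workflow` function and the step names are hypothetical.

```python
def prune_workflow(dependencies, targets):
    """Keep only the steps that the target outputs transitively depend on."""
    needed = set()
    stack = list(targets)
    while stack:
        step = stack.pop()
        if step not in needed:
            needed.add(step)
            stack.extend(dependencies.get(step, ()))
    return {step: deps for step, deps in dependencies.items() if step in needed}


# Hypothetical workflow: each step maps to the steps it depends on.
workflow = {
    "trim_reads": [],
    "quality_report": ["trim_reads"],
    "align": ["trim_reads"],
    "call_variants": ["align"],
}
pruned = prune_workflow(workflow, targets=["call_variants"])
print(sorted(pruned))  # ['align', 'call_variants', 'trim_reads']
```

Here `quality_report` is dropped because nothing on the path to `call_variants` consumes its output.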

Keywords: workflow similarities, FAIR workflows, provenance, databases, data science

Main investigator: Sarah Cohen-Boulakia

Main software: BioFlow-Insight

Biological data ranking

Another challenge is to help scientists face the deluge of available data. The problem is twofold: the number of biological databases keeps increasing, and the number of answers returned by even a single database may be too large to handle. A first series of approaches we propose aims at guiding users through the maze of biological databases while taking into account user preferences regarding the kind of resources to be queried. As for ranking biological data, the main difficulty lies in taking various ranking criteria into account and combining them. Our originality lies in considering rank aggregation (a.k.a. median ranking). Our approaches make use of database and combinatorial techniques.
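To make the idea of rank aggregation concrete, the following minimal sketch computes a consensus ranking by brute force: it returns the permutation that minimizes the total Kendall tau distance to the input rankings. It is an illustration only, not the algorithm used in ConQuR-Bio. It assumes complete rankings without ties over the same items, it is only practical for a handful of items, and the gene names are hypothetical.

```python
from itertools import combinations, permutations


def kendall_tau(r1, r2):
    """Count the item pairs that the two rankings order differently."""
    pos1 = {item: i for i, item in enumerate(r1)}
    pos2 = {item: i for i, item in enumerate(r2)}
    return sum(
        1
        for a, b in combinations(r1, 2)
        if (pos1[a] - pos1[b]) * (pos2[a] - pos2[b]) < 0
    )


def median_ranking(rankings):
    """Brute-force consensus: the permutation with minimal total distance."""
    items = sorted(rankings[0])
    return min(
        permutations(items),
        key=lambda cand: sum(kendall_tau(cand, r) for r in rankings),
    )


# Hypothetical rankings of the same four items under three criteria.
rankings = [
    ["geneA", "geneB", "geneC", "geneD"],
    ["geneB", "geneA", "geneC", "geneD"],
    ["geneA", "geneC", "geneB", "geneD"],
]
consensus = median_ranking(rankings)
print(list(consensus))  # ['geneA', 'geneB', 'geneC', 'geneD']
```

The consensus agrees with the pairwise majority on every pair of items. Exhaustive search grows factorially with the number of items, so realistic inputs, especially those containing ties, call for dedicated exact or heuristic algorithms.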

Keywords: database, consensus ranking, combinatorics

Main investigators: Sarah Cohen-Boulakia, Alain Denise, Adeline Pierrot

Main software: ConQuR-Bio

Biological data integration

More generally, the group is interested in designing new methods to integrate biological data. Recent projects include Covid-NMA (https://covid-nma.com), the integration of all the clinical trials on Covid-19 coordinated by WHO.

Main investigator: Sarah Cohen-Boulakia